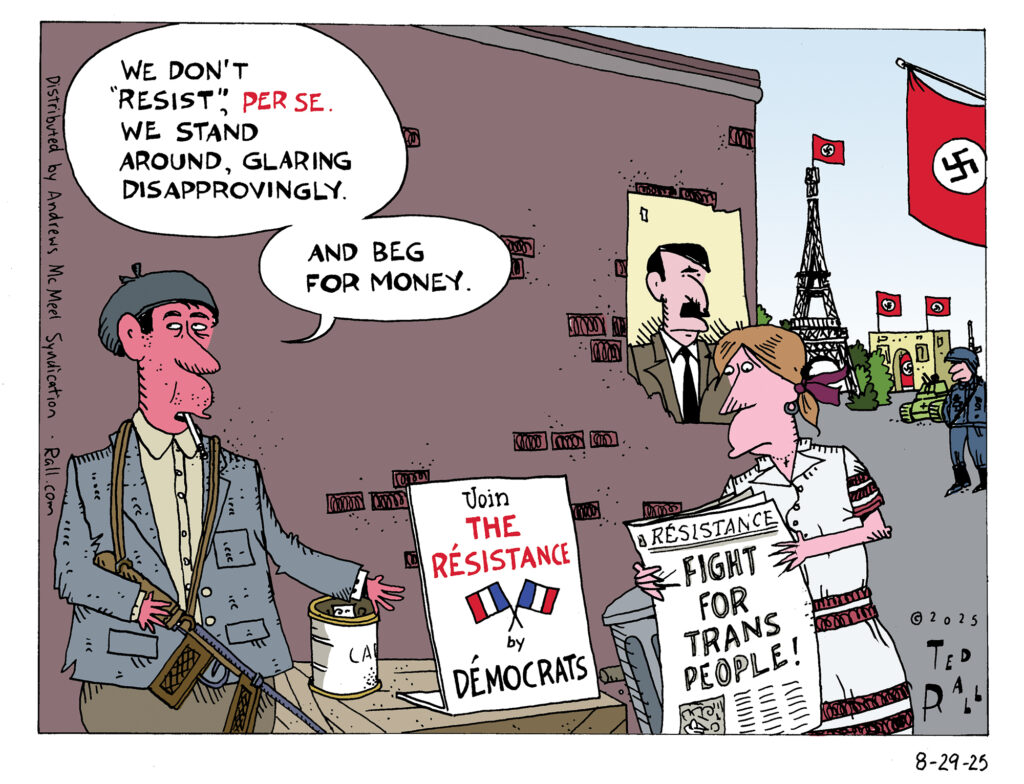

Democrats think of themselves as leading some sort of Resistance to Trump. Reality begs to differ.

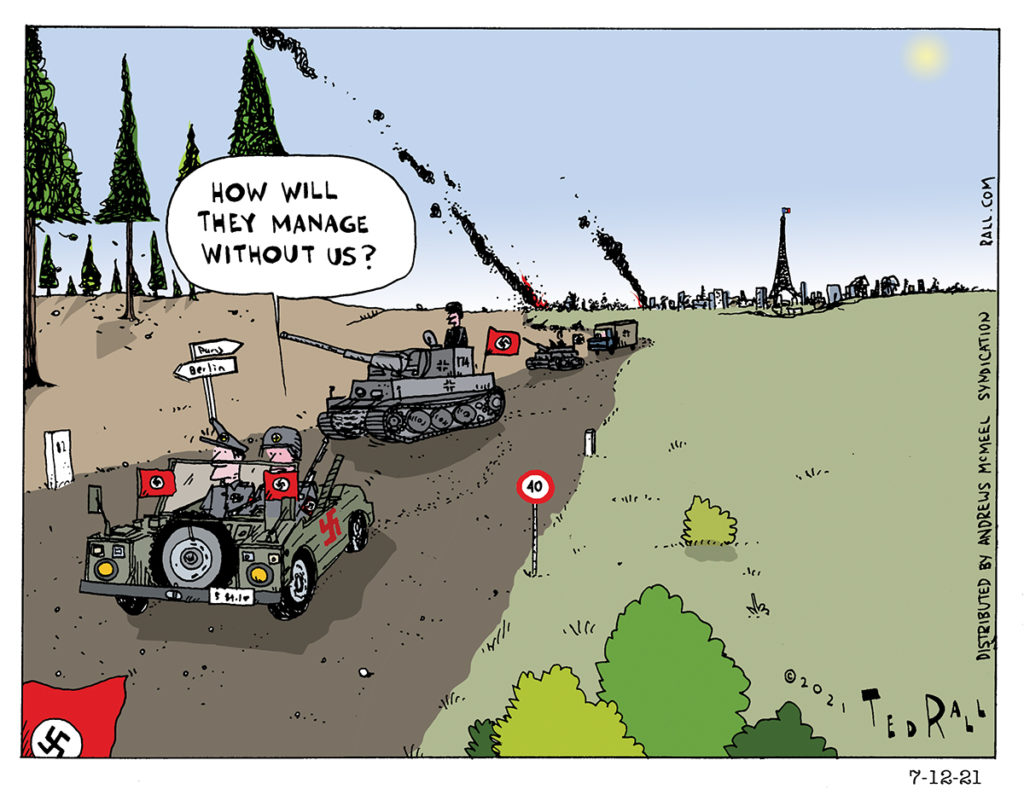

How Will They Manage without Us?

As the United States completes its pullout from Afghanistan, the usual suspects worry aloud that the country won’t be able to manage without us. What they and other people with a neo-colonialist mentality don’t realize is that Afghanistan is a sovereign country and that we have been interfering with it unnaturally for 20 years.

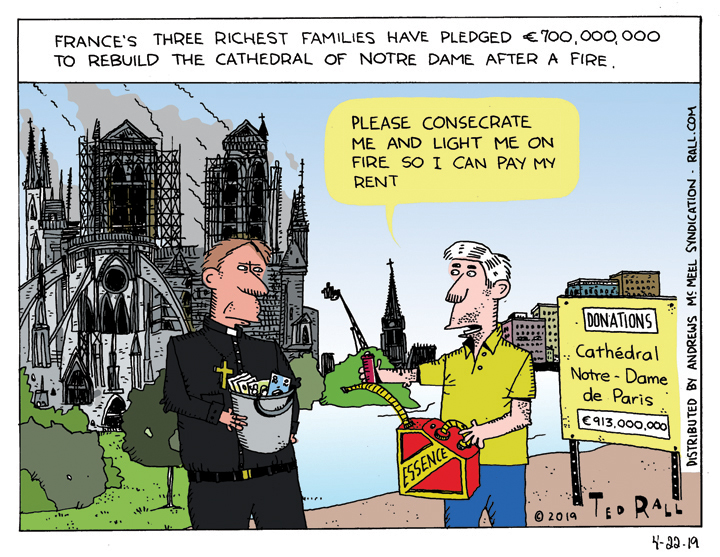

Broke? Declare Yourself a Church and Burn

After a devastating fire laid waste to much of the Cathedral of Notre Dame in Paris, three spectacularly wealthy French industrial families pledged 700 million euros to rebuild the iconic structure. It was a generous gesture. But it was disconcerting that purse strings would open so quickly to repair a damaged building when so many actual, living, breathing human beings in France were suffering so badly that the so-called "yellow vest movement" had been rioting in the streets of Paris just a few weeks earlier.

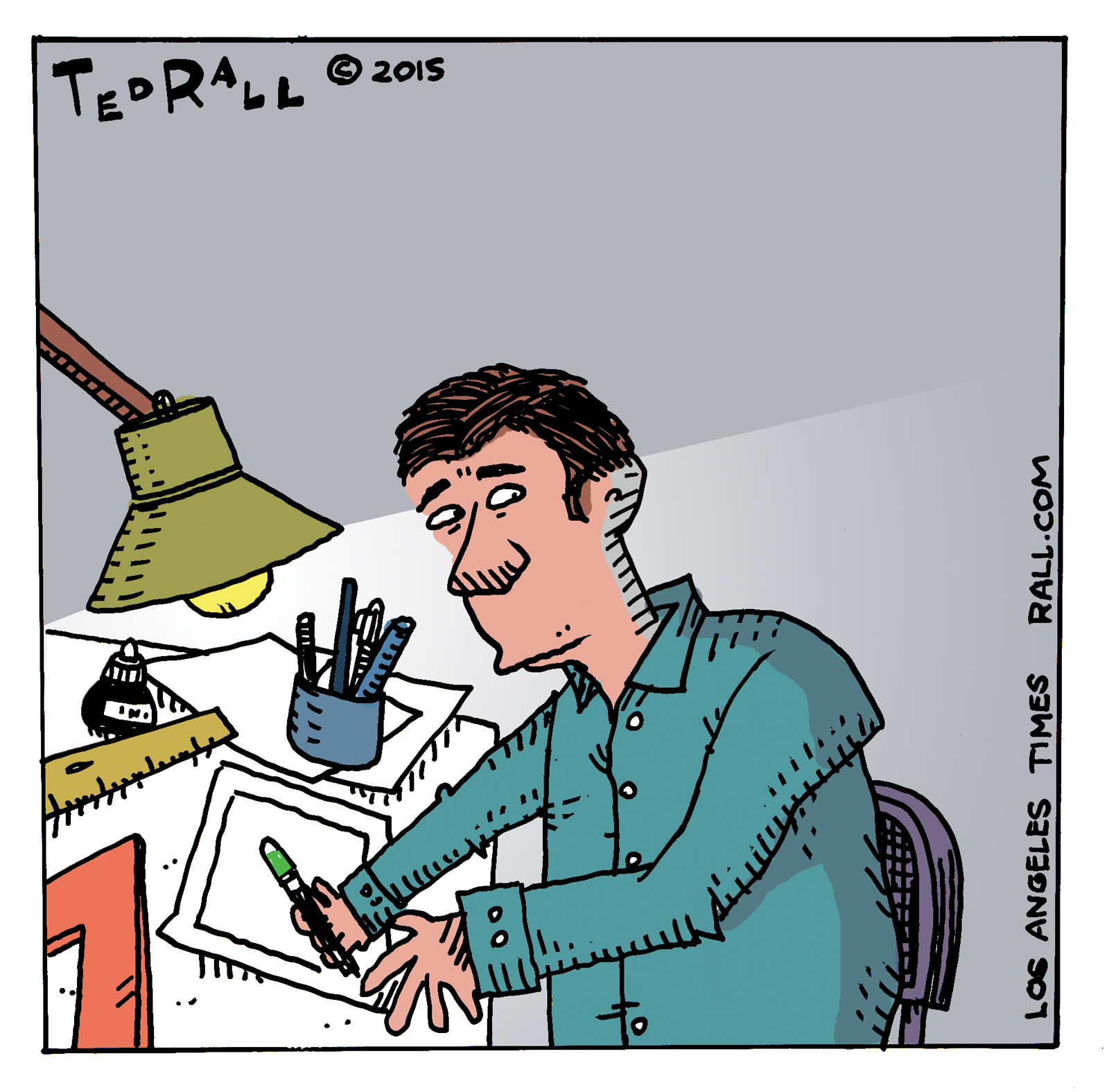

SYNDICATED COLUMN: Editors, Not Terrorists, Killed American Political Cartooning

The Charlie Hebdo massacre couldn’t have happened here in the United States. But it’s not because American newspapers have better security.

Gunmen could never kill four political cartoonists in an American newspaper office because no paper in the U.S. employs two, much less four, staff political cartoonists — the number who died Wednesday in Paris. There is no equivalent of Charlie Hebdo, which puts political cartoons front and center, in the States. (The Onion never published political cartoons — and it ceased print publication last year. MAD, for which I draw, focuses on popular culture.)

When I began drawing political cartoons professionally in the early 1990s, hundreds of my colleagues worked on staff at newspapers, with full salaries and benefits. That was already down from journalism's mid-century glory days, when there were thousands. Many papers employed two. Shortly after World War II, The New York Times, which today has none, employed four cartoonists on staff. Today there are fewer than 30 staff political cartoonists in the entire country.

Most American states have zero full-time staff political cartoonists.

Many big states — California, New York, Texas, Illinois — have one.

No American political magazine, on the left, center or right, has one.

No American political website (Huffington Post, Talking Points Memo, Daily Kos, Slate, Salon, etc.) employs a political cartoonist. Although its launch video was done in cartoons, eBay billionaire Pierre Omidyar’s new $250 million left-wing start-up First Look Media refuses to hire political cartoonists — or pay tiny fees to reprint syndicated ones.

These outfits have tons of staff writers.

During the last few days, many journalists and editors have spread the “Je Suis Charlie” meme through social media in order to express “solidarity” with the victims of Charlie Hebdo, political cartoonists (who routinely receive death threats, whether they live in France or the United States) and freedom of expression. That’s nice.

No it’s not.

It’s annoying.

As far as political cartoonists are concerned, editorials pledging "solidarity" with the Charlie Hebdo cartoonists are an empty gesture: corporate slacktivism. Less than 24 hours after the shootings at Charlie Hebdo, the Fort Lauderdale Sun-Sentinel fired its longtime, award-winning political cartoonist, Chan Lowe.

Political cartoonists: editors love us when we’re dead. While we’re still breathing, they’re laying us off, slashing our rates, stealing our copyrights and disappearing us from where we used to appear — killing our art form.

American editors and publishers have never been as willing to publish satire, whether in pictures or in words, as their European counterparts. But things have gone from bad to apocalyptic in the last 30 years.

Humor columnists like the late Art Buchwald earned millions syndicating their jokes about politicians and current events to American newspapers through the 1970s and 1980s. Miami Herald humor writer Dave Barry was a rock star through the 1990s, routinely cranking out bestselling books. Then came 9/11.

When I began working as an executive talent scout for the United Media syndicate in 2006, my sales staff informed me that, if Barry had started out then, they wouldn’t have been able to sell him to a single newspaper, magazine or website — not even if they gave his work to them for free. Barry was still funny, but there was no market for satire anywhere in American media.

That’s even truer today.

The youngest working political cartoonist in the United States, Matt Bors, is 31. When people ask me who the next up-and-comer is, I tell them there isn’t one — and there won’t be one any time soon.

Americans are funny. Americans like funny. They especially like wicked funny. We’re so desperate for funny that we think Jon Stewart is hilarious. (But…Richard Pryor. He really was.) But editors and producers won’t give them funny, much less mean-funny.

Why not?

Like any other disaster, media censorship of satire — especially graphic satire — in the U.S. is caused by several contributing factors.

Most media outlets are owned by corporations, not private owners. Publicly-traded companies are risk-averse. Executives prefer to publish boring/safe content that won’t generate complaints from advertisers or shareholders, much less force them to hire extra security guards.

Half a century ago, many editors had working-class backgrounds and rose through the ranks from the bottom. Now they're graduates of pricey university graduate journalism programs that don't offer scholarships and don't teach a single class about comics, cartoons, humor or graphic art. It takes an unusually curious editor to make the effort to educate himself or herself about political cartoons.

Corporate journalism executives view cartoons as frivolous, less serious than “real” commentary like columns or editorials. Unfortunately, some editorial cartoonists make this problem worse by drawing silly gags about current events (as opposed to trenchant attacks on the powers that be) because they’ve seen their blandest work win Pulitzers and coveted spots in the major weekend cartoon “round-ups.” When asked to cut their budget, editors often look at their cartoonist first.

There is still powerful political cartooning online. Ironically, the Internet contributes to the death of satire in America by sating the demand for hard-hitting political art. Before the Web, if a paper canceled my cartoons they would receive angry letters from my fans. Now my readers find me online — but the Internet pays pennies on the print dollar. I’m stubbornly hanging on, but many talented cartoonists, especially the young, won’t work for free.

It’s not that media organizations are broke. Far from it. Many are profitable. American newspapers and magazines employ tens of thousands of writers — they just don’t want anyone writing or drawing anything that questions the status quo, especially not in a form as powerful as political cartooning.

The next time you hear editors pretending to stand up for freedom of expression, ask them if they employ a cartoonist.

(Ted Rall, syndicated writer and cartoonist for The Los Angeles Times, is the author of the new critically acclaimed book "After We Kill You, We Will Welcome You Back As Honored Guests: Unembedded in Afghanistan." Subscribe to Ted Rall at Beacon.)

COPYRIGHT 2015 TED RALL, DISTRIBUTED BY CREATORS.COM

Special to The Los Angeles Times: Political Cartooning is Almost Worth Dying For

Originally published by The Los Angeles Times:

An event like yesterday's slaughter at the Paris office of the newspaper Charlie Hebdo, where at least 10 staff members (including four political cartoonists) and two policemen were killed, elicits so many responses that it's hard to sort them out.

If you have a personal connection, that comes first.

I do.

I met a group of Charlie Hebdo cartoonists, including one of the victims, a few years ago at the annual cartoon festival in Angoulême, France, the biggest gathering of cartoonists and their fans in the world. They had sought me out partly because they were fans of my work (for whatever reason, my stuff seems to travel well overseas) and partly because I was an American cartoonist who speaks French. We did what cartoonists do: we got drunk, complained about our editors, and exchanged trade secrets, including pay rates.

If I lived in France, that’s where I’d want to work.

My French counterparts struck me as more self-confident and cockier than the average cartoonist. Unlike at the older, venerable Le Canard Enchaîné, cartoons, not prose, are the centerpiece of Charlie Hebdo. The paper has suffered financial troubles over the years, yet the French have somehow kept it afloat because they love comics.

Here’s how much France values graphic satire:

- More full-time staff political cartoonists were killed in Paris yesterday than are employed at newspapers in the states of California, Texas and New York combined.

- More full-time staff cartoonists were killed in Paris yesterday than work at all American magazines and websites combined.

The Charlie Hebdo artists knew they were working at a place that not only allows them to push the envelope, but encourages it. Hell, they didn’t even tone things down after their office got bombed.

They weren’t paid much, but they were having fun. The last time that I met print journalists as punk rock as those guys, they were at the old Spy magazine.

They would definitely want that attitude to outlive them.

Next comes the “there but for the grace of God” reaction.

Every political cartoonist receives threats. After 9/11 especially, people promised to blow me up with a bomb, slit the throats of every member of my family, rape me, and deprive me of a livelihood by organizing sketchy boycott campaigns. (That last one almost worked.)

The most chilling came from a New York police officer, a sergeant, who was so careless and/or unconcerned about getting in trouble that his caller ID popped up.

Who was I going to call to complain? The cops?

As far as I know, no editorial cartoonist has been murdered in response to the content of his or her work in the United States, but there's a first time for everything. Political cartoonists have been killed and brutally beaten in other countries. Here in the United States, the murder of an outspoken radio talk show host reminds us that political murder isn't something that only takes place somewhere else.

Every political cartoonist takes a risk to exercise freedom of expression.

We know that our work, strident and opinionated, makes a lot of people very angry, and that we live in a country where a lot of people have a lot of guns. Whether you work in a newspaper office guarded by a minimum wage security guard or, as is increasingly the norm, in your own home, you are always one pull of a trigger away from death when you hit “send” to fire off your cartoon to your syndicate, blog or publication.

Which brings me to my big-picture reaction to yesterday’s horror:

Cartoons are incredibly powerful.

Not to denigrate writing (especially since I do a lot of it myself), but cartoons elicit far more response from readers, both positive and negative, than prose. Websites that run cartoons, especially political cartoons, are consistently amazed at how much more traffic they generate than words. I have twice been fired by newspapers because my cartoons were too widely read — editors worried that they were overshadowing their other content.

Scholars and analysts of the form have tried to articulate exactly what it is about comics that makes them so effective at drawing an emotional response, but I think it's the fact that such a deceptively simple art form can pack such a wallop. Particularly in the political cartoon format, nothing more than workaday artistic chops and a few snide sentences can be enough to cause a reader to question his long-held political beliefs, national loyalties, even his faith in God.

That drives some people nuts.

Think of the rage behind the gunmen who invaded Charlie Hebdo’s office yesterday, and that of the men who ordered them to do so. It’s too early to say for sure, but it’s a fair guess that they were radical Islamists. I’d like to ask them: how weak is your faith, how lame a Muslim must you be, to allow yourself to be reduced to the murder of innocents, over ink on paper colorized in Photoshop? In a sense, they were victims of cartoon derangement syndrome, the same affliction that led to the burning of embassies over the Danish Mohammed cartoons, the repeated outrage over The New Yorker’s insipid yet controversial covers, and that NYPD sergeant in Brooklyn who called me after he read my cartoon criticizing the invasion of Iraq.

Political cartooning in the United States gets no respect. I was thinking about that this morning when I heard NPR’s Eleanor Beardsley call Charlie Hebdo “gross” and “in poor taste.” (I should certainly hope so! If it’s in good taste, it ain’t funny.) It was a hell of a thing to say, not to mention not true, while the bodies of dead journalists were still warm. But these were cartoonists, and therefore unworthy of the same level of decorum that a similar event at, say, The Onion – which mainly runs words – would merit.

But no matter. Political cartooning may not pay well, or often at all, and media elites can ignore it all they want. (Hey book critics: graphic novels exist!) But it matters.

Almost enough to die for.

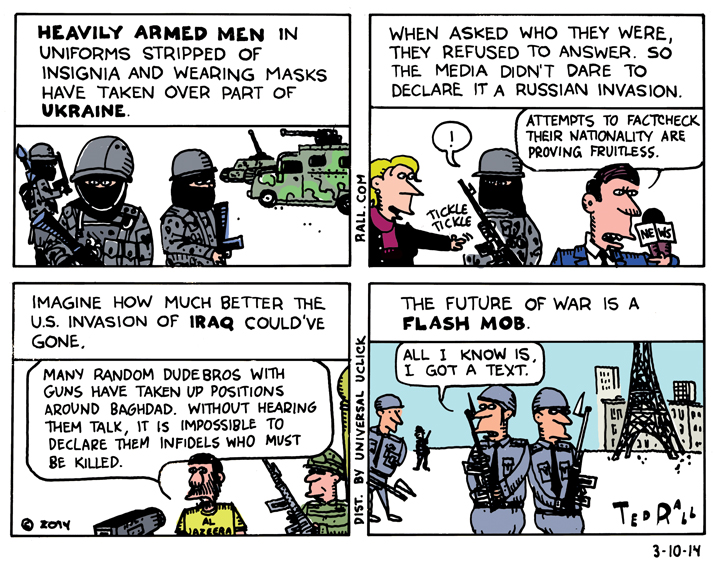

Flash War

Heavily armed men who took over Crimea last week refused to say who they were, so foreign media outlets dutifully refused to accuse Russia of invading Ukraine until after it had happened. Imagine how much better the invasion of Iraq would have gone if nobody had been able to blame the United States for it.

AL JAZEERA COLUMN: Libya: The triumphalism of the US media

Obama and the US media are taking credit for Gaddafi’s downfall, but it was the Libyan fighters who won the war.

The fall of Moammar Gaddafi was a Libyan story first and foremost. Libyans fought, killed and died to end the Colonel’s 42-year reign.

No doubt, the U.S. and its NATO proxies tipped the military balance in favor of the Benghazi-based rebels. It’s hard for any government to defend itself when denied the use of its own airspace as enemy missiles and bombs blast away its infrastructure over the course of more than 20,000 sorties.

Still, it was Libyans who took the biggest risks and paid the highest price. They deserve the credit. From a foreign policy standpoint, it behooves the West to give it to them. Consider a parallel, the fall 2001 bombing campaign against the Taliban. With fewer than a thousand Special Forces troops on the ground in Afghanistan to bribe tribal leaders and guide bombs to their targets, the U.S. military and CIA relied exclusively on air power to allow the Northern Alliance to advance. The premature announcement that major combat operations had ceased, followed by the installation of Hamid Karzai as de facto president—a man widely seen as a U.S. figurehead—set the stage for what would eventually become America’s longest war.

As did the triumphalism of the U.S. media, which treated the "defeat" (more like the dispersal) of the Taliban as Bush's victory. The Northern Alliance was a mere afterthought, condescended to at every turn by the punditocracy. To paraphrase Bush's defense secretary Donald Rumsfeld, the U.S. went to war with the ally it had, not the one it would have liked to have had. America's attitude toward Karzai and his government reflected that in many ways: snipes and insults, including the suggestion that the Afghan leader was mentally ill and ought to be replaced, as well as years of funding too low to meet payroll and other basic needs, which limited the Afghan government's power to metro Kabul and a few other major cities. In retrospect, it would have been smarter for the U.S. to have graciously credited (and funded) the Northern Alliance for its defeat of the Taliban, content to remain the power behind the throne.

Despite this experience in Afghanistan, "victory" in Libya has prompted a renewal of triumphalism in the U.S. media.

Like a slightly drunken crowd at a football match giddily shouting “U-S-A,” editors and producers keep thumping their chests long after it stops being attractive.

When Obama announced the anti-Gaddafi bombing campaign in March, Stephen Walt issued a relatively safe pair of predictions. “If Gaddafi is soon ousted and the rebel forces can establish a reasonably stable order there, then this operation will be judged a success and it will be high-fives all around,” Walt wrote in Foreign Policy. “If a prolonged stalemate occurs, if civilian casualties soar, if the coalition splinters, or if a post-Gaddafi Libya proves to be unstable, violent, or a breeding ground for extremists…his decision will be judged a mistake.”

It’s only been a few days since the fall of Tripoli, but high-fives and victory dances abound.

“Rebel Victory in Libya a Vindication for Obama,” screamed the headline in U.S. News & World Report.