Seeing is believing. In the age of AI, it shouldn’t be.

In June, for example, Ron DeSantis’ presidential campaign issued a YouTube ad that used generative artificial-intelligence technology to produce a deep-fake image of former President Donald Trump appearing to hug Dr. Anthony Fauci, the former COVID-19 czar despised by anti-vax and anti-lockdown Republican voters. Video of Elizabeth Warren has been manipulated to make her look as though she was calling for Republicans to be banned from voting. She wasn’t. As early as 2019, a Malaysian cabinet minister was targeted by an AI-generated video clip that falsely but convincingly portrayed him as confessing to having appeared in a gay sex video.

Ramping up in earnest with the 2024 presidential campaign, this kind of chicanery is going to start happening a lot. And away we go: “The Republican National Committee in April released an entirely AI-generated ad meant to show the future of the United States if President Joe Biden is re-elected. It employed fake but realistic photos showing boarded-up storefronts, armored military patrols in the streets, and waves of immigrants creating panic,” PBS reported.

“Boy, will this be dangerous in elections going forward,” former Obama staffer Tommy Vietor told Vanity Fair.

Like the American Association of Political Consultants, I’ve seen this coming. My 2022 graphic novel The Stringer depicts how deep-fake videos and other falsified online content of political leaders might even cause World War III. Think that’s an overblown fear? Think again. Remember how residents of Hawaii jumped out of their cars and jumped down manholes after state authorities mistakenly issued a phone alert of an impending missile strike? Imagine how foreign officials might respond to a high-quality deep-fake video of, for example, President Joe Biden declaring war on North Korea or of Israeli Prime Minister Benjamin Netanyahu seeming to announce an attack against Iran. What would you do if you were a top official in the DPRK or Iranian governments? How would you determine whether the threat were real?

Here in the U.S., generative-AI-created political content will stoke racial, religious and partisan hatred that could lead to violence, not to mention interfere with elections.

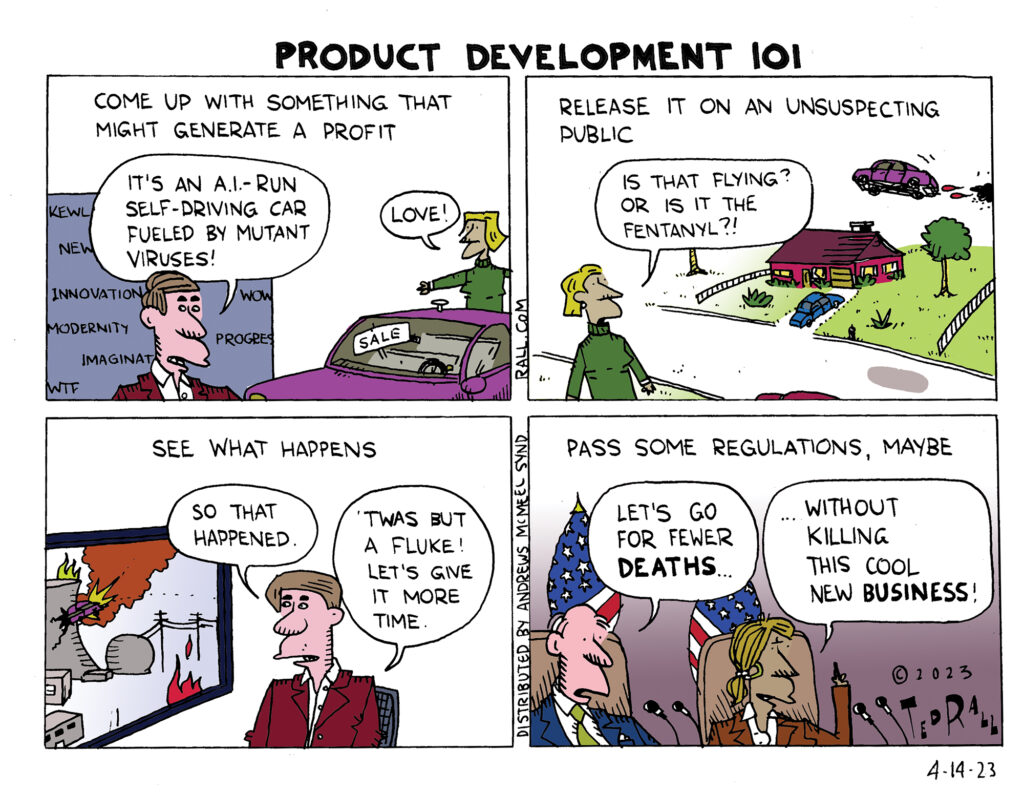

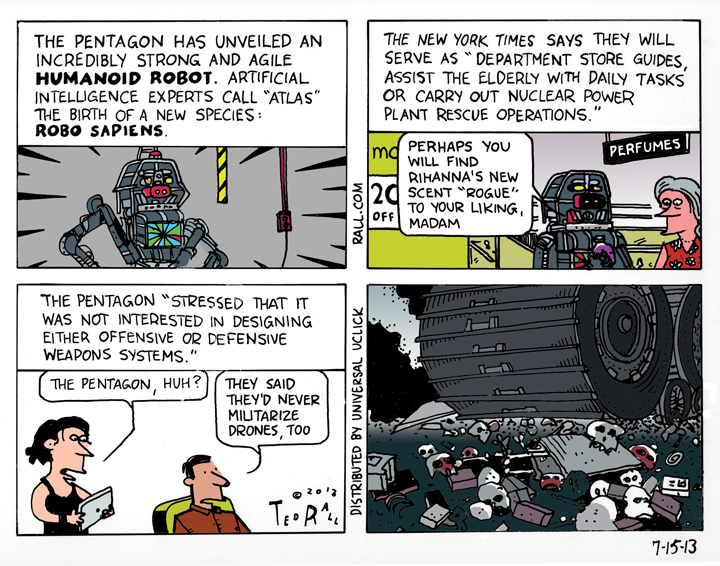

Private industry and government regulators understand the danger. So far, however, proposed safeguards fall way short of what would be needed to ensure that the vast majority of political content is what it seems to be.

The Federal Election Commission has barely begun to consider the issue. The real action so far, such as it is, has been on the Silicon Valley front. “Starting in November, Google will mandate all political advertisements label the use of artificial intelligence tools and synthetic content in their videos, images and audio,” Politico reports. “Google’s latest rule update, which also applies to YouTube video ads, requires all verified advertisers to prominently disclose whether their ads contain ‘synthetic content that inauthentically depicts real or realistic-looking people or events.’ The company mandates the disclosure be ‘clear and conspicuous’ on the video, image or audio content. Such disclosure language could be ‘this video content was synthetically generated,’ or ‘this audio was computer generated,’ the company said.”

Labeling will be ineffective. Synthetic content that deep-fakes a politician or a group of people doing or saying something they never did or said sticks in people’s minds even after they’ve been informed that it’s fake, especially when the material confirms or fits with viewers’ pre-existing assumptions and world views.

The only solution is to make sure such fakes are never seen at all. AI-generated deep fakes of political content should be banned online, with or without a warning label.

The culprit is the “illusory truth effect” of basic human psychology: once you have seen something, you can’t unsee it, especially if it’s repeated. Even after you are told that something you’ve seen was fake and to disregard it, it continues to influence you as if you still took it at face value. Trial lawyers are well aware of this phenomenon, which is why they knowingly make arguments and allegations that a judge is bound to order stricken from the court record; jurors have heard them, they assume there’s at least some truth to them, and their deliberations are affected.

We’ve seen how pernicious misinformation can be, from the Russiagate hoax to Bush’s lie that Saddam was aligned with Al Qaeda, which left over a million people dead, and how such falsehoods retain currency long after they’ve been debunked. Typical efforts to correct the record, like “fact-checking” news sites, are ineffective and sometimes even serve to reinforce the falsehood they’re attempting to correct or undermine. And those examples are ideas expressed through mere words.

Real or fake, a picture speaks more loudly than a thousand words. False visuals are even more powerful than falsehoods expressed through prose. There is no contemporaneous evidence that any Vietnam War veteran was ever spat on by antiwar protesters; throughout the late 1970s, no vet ever made such a claim, even in personal correspondence. Yet many Vietnam vets began to say it had happened to them after they viewed Sylvester Stallone’s monologue in the 1982 movie “First Blood,” the first Rambo film, which was likely intended as a metaphor. They probably even believe it; they “remember” what never occurred.

Warning labels can’t reverse the powerful illusory truth effect. Moreover, there is nothing to stop someone from copying a properly labeled AI-generated deep-fake attack ad, stripping out any indication that the content isn’t what it seems, and redistributing it.

AI is here to stay. So are bad actors and scammers. Particularly in the political space, First Amendment-guaranteed free speech must be protected. But thoughtful government regulation of AI, with strong enforcement mechanisms including meaningful penalties, will be essential if we want to avoid chaos and worse.

(Ted Rall (Twitter: @tedrall), the political cartoonist, columnist and graphic novelist, co-hosts the left-vs-right DMZ America podcast with fellow cartoonist Scott Stantis. You can support Ted’s hard-hitting political cartoons and columns and see his work first by sponsoring his work on Patreon.)